Your competition's reputation lives or dies on one thing: do participants trust the results?

Nobody remembers who came second at a well-run event. But everyone remembers the competition where the judging felt rigged, where the criteria changed midway, or where one judge's cousin somehow won.

Publish Your Criteria Before Anyone Submits

The most common judging disaster happens when organizers make up criteria as they go. We've seen hackathon judges suddenly decide "team diversity" matters after entries close. We've watched talent shows add "audience engagement" to scoring rubrics mid-performance. The result is always the same: angry participants who feel the rules shifted under them.

Lock your criteria down before the first submission. Then publish them everywhere.

Here's a sample rubric for a hackathon:

| Criterion | Weight | Description |
|---|---|---|
| Functionality | 30% | Core features work as intended |
| Innovation | 25% | Novel approach to the problem |
| Design | 20% | User interface and experience |
| Presentation | 15% | Clear communication of concept |
| Technical Merit | 10% | Code quality and scalability |

Each criterion needs clear scoring levels. Don't ask judges to pick a number between 1 and 10 for "innovation" — define what a 2 looks like versus an 8. Otherwise you'll get one judge who thinks everything deserves a 7 and another who never gives higher than 4.
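If you keep the rubric as data rather than only as a table, a few lines of code can catch mistakes before you publish it. The sketch below uses the weights from the sample rubric above. The anchor descriptions for "Innovation" and the helper names `validate_weights` and `nearest_anchor` are hypothetical, included only to show what written-down scoring levels might look like.

```python
# Weights copied from the sample rubric, stored as whole percentages.
RUBRIC = {
    "Functionality": 30,
    "Innovation": 25,
    "Design": 20,
    "Presentation": 15,
    "Technical Merit": 10,
}

# Hypothetical anchor descriptions for one criterion (wording is illustrative only).
INNOVATION_LEVELS = {
    2: "Re-implements a well-known solution with no meaningful changes",
    5: "Combines existing ideas in a way that is new for this problem",
    8: "Introduces an approach the judges have not seen applied before",
}


def validate_weights(rubric: dict[str, int]) -> None:
    """Raise ValueError unless every weight is positive and they sum to 100."""
    if any(weight <= 0 for weight in rubric.values()):
        raise ValueError("every criterion needs a positive weight")
    total = sum(rubric.values())
    if total != 100:
        raise ValueError(f"weights sum to {total}, expected 100")


def nearest_anchor(levels: dict[int, str], score: int) -> str:
    """Return the description of the anchor level closest to the given score."""
    closest = min(levels, key=lambda level: abs(level - score))
    return levels[closest]


validate_weights(RUBRIC)
print(nearest_anchor(INNOVATION_LEVELS, 7))
```

A judge who is unsure whether an entry deserves a 7 can read the nearest anchor and decide whether the entry really matches it.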

Bias Creeps In Whether You Want It To or Not

Three to five judges works best. A single judge's weird preferences get smoothed out across a panel.

Where possible, hide identifying information from judges. You'd be surprised how much institutional affiliation can affect scoring: a judge from Stanford may rate other Stanford entries higher without realizing it. The same goes for demographic factors, past interactions, and personal relationships.

Conflicts of interest need hard rules. A judge shouldn't evaluate entries from their employer, their drinking buddies, or their direct competitors. Write this policy down. Enforce it.

Before judging starts, run a calibration session. Have everyone score the same two practice entries, then compare. When Judge A gives an entry an 8 and Judge B gives it a 4, that's your chance to align interpretations. Skip calibration, and your final rankings will be partly determined by which entries happened to land on which judge's desk.
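A calibration comparison is easy to automate. The sketch below assumes each judge's practice scores are collected in a dictionary and flags any pair of judges who differ on the same entry by more than a chosen tolerance. The judge names, scores, `MAX_GAP` value, and `find_disagreements` helper are all hypothetical.

```python
from itertools import combinations

# Hypothetical calibration scores: judge -> {practice entry -> score on a 1-10 scale}.
calibration_scores = {
    "Judge A": {"practice-1": 8, "practice-2": 6},
    "Judge B": {"practice-1": 4, "practice-2": 6},
    "Judge C": {"practice-1": 7, "practice-2": 5},
}

MAX_GAP = 2  # assumed tolerance; choose a value that suits your scale


def find_disagreements(scores, max_gap):
    """Return (entry, judge_x, judge_y, gap) for each pair of judges more than max_gap apart."""
    flagged = []
    for judge_x, judge_y in combinations(sorted(scores), 2):
        # Only compare entries that both judges actually scored.
        shared = scores[judge_x].keys() & scores[judge_y].keys()
        for entry in sorted(shared):
            gap = abs(scores[judge_x][entry] - scores[judge_y][entry])
            if gap > max_gap:
                flagged.append((entry, judge_x, judge_y, gap))
    return flagged


for entry, judge_x, judge_y, gap in find_disagreements(calibration_scores, MAX_GAP):
    print(f"{entry}: {judge_x} and {judge_y} differ by {gap} points")
```

With these sample numbers, the first practice entry is flagged twice (Judge A versus Judge B, and Judge B versus Judge C), which points to Judge B as the person to talk to before real judging begins.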

Pick Judges Who Know the Domain

Your judges need actual expertise. A coding competition judged by marketing executives produces embarrassing results. So does a cooking competition judged by people who've never worked in a kitchen.

Look for varied perspectives within the domain. Five senior developers from the same company will score differently than a panel mixing frontend specialists, backend architects, and product managers. Homogeneous panels produce homogeneous blind spots.

Make sure your judges have time. Rushed judging produces inconsistent scores. A judge evaluating 40 entries in three hours has four and a half minutes per entry and will start rubber-stamping by entry 15.

Weighted Scoring Makes Your Priorities Explicit

Not every criterion matters equally. The World Food Championships weights taste at 50%, execution at 35%, and appearance at 15%. That weighting tells contestants exactly what to optimize for.

Decide weights before competition day. Communicate them to participants in advance. And never — under any circumstances — adjust weights after judging begins. We've seen organizers tweak weights to push a preferred entry up the rankings. Participants notice. Word spreads.

Use software to calculate weighted totals. Manual math introduces errors, and errors that happen to favor certain contestants look suspicious even when they're innocent mistakes.
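The calculation itself is small. Here is a minimal sketch, assuming every judge scores every criterion on the same 1-10 scale and that weights are stored as whole percentages. It reuses the hackathon weights from the sample rubric; the judge sheets and the `weighted_total` helper are hypothetical.

```python
from statistics import mean

# Percentage weights from the sample hackathon rubric.
WEIGHTS = {
    "Functionality": 30,
    "Innovation": 25,
    "Design": 20,
    "Presentation": 15,
    "Technical Merit": 10,
}


def weighted_total(judge_sheets, weights):
    """Average each criterion across judges, then combine using percentage weights."""
    total = 0.0
    for criterion, weight in weights.items():
        # A missing criterion raises KeyError, so incomplete sheets fail loudly.
        average = mean(sheet[criterion] for sheet in judge_sheets)
        total += average * weight
    return total / 100


# Hypothetical score sheets from two judges for a single entry.
sheets = [
    {"Functionality": 8, "Innovation": 6, "Design": 7, "Presentation": 9, "Technical Merit": 5},
    {"Functionality": 6, "Innovation": 8, "Design": 7, "Presentation": 7, "Technical Merit": 7},
]

print(round(weighted_total(sheets, WEIGHTS), 2))  # prints 7.05
```

Averaging per criterion before weighting is one reasonable choice, not the only one. Whatever order you pick, publish it along with the weights.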

Decide Tie-Breakers Before You Need Them

Ties happen more than you'd expect, especially when judges cluster around the same scores. Making up tie-breaking rules after a tie occurs invites accusations of favoritism.

Pick your method in advance: the higher score in the most heavily weighted criterion wins, the earlier submission time wins, or a subset of judges conducts a head-to-head review. Document it in your competition rules. When a tie happens, you just execute the procedure.
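The first two tie-breakers can be written as a single sort key, so the procedure runs the same way every time. This is a sketch under assumptions: the entry names, scores, and timestamps are made up, "functionality" stands in for the most important criterion, and totals are assumed to be rounded to a fixed precision already so that equal scores compare as equal. A head-to-head review still needs people, not code.

```python
from datetime import datetime

# Hypothetical entries; "functionality" stands in for the most important criterion.
entries = [
    {"name": "Team Alpha", "total": 7.05, "functionality": 7.0,
     "submitted": datetime(2024, 5, 4, 16, 30)},
    {"name": "Team Beta", "total": 7.05, "functionality": 7.5,
     "submitted": datetime(2024, 5, 4, 17, 10)},
    {"name": "Team Gamma", "total": 7.05, "functionality": 7.5,
     "submitted": datetime(2024, 5, 4, 15, 45)},
    {"name": "Team Delta", "total": 6.40, "functionality": 8.0,
     "submitted": datetime(2024, 5, 4, 12, 0)},
]


def ranking_key(entry):
    """Sort by total (high first), then top criterion (high first), then submission time (early first)."""
    return (-entry["total"], -entry["functionality"], entry["submitted"])


ranked = sorted(entries, key=ranking_key)
for place, entry in enumerate(ranked, start=1):
    print(place, entry["name"])
```

Here three teams tie on total score. Gamma and Beta beat Alpha on the top criterion, and Gamma places ahead of Beta because it submitted earlier.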

Tell Participants Everything

Trust comes from transparency. Before judging, share your criteria, weights, judge qualifications, scoring methodology, and timeline. During judging, post progress updates if the event spans multiple days. After judging, give participants their scores — at minimum, their own results, ideally with judge feedback.

Organizers who hide scores create suspicion. Organizers who publish everything build reputations that attract better participants next year.

Score Judge Handles the Mechanics

Running judging with spreadsheets creates opportunities for errors. Copying scores from paper forms to Excel, calculating weighted averages by hand, tracking which judge scored which entry — it's tedious and error-prone.

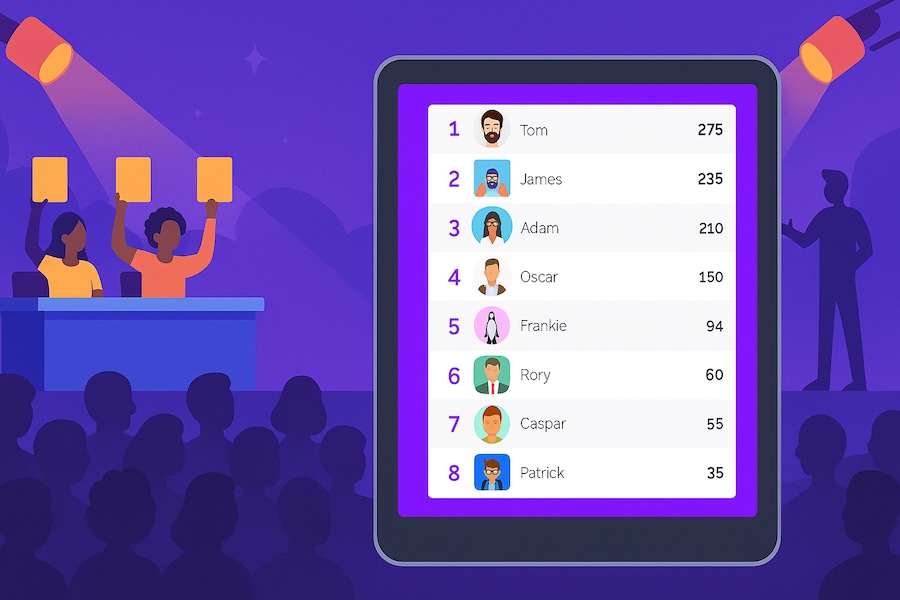

Score Judge lets multiple judges score from their own devices while results aggregate automatically. You set up your rubric once. Every judge uses the same framework. Weighted scoring and tie-breaking apply without manual calculation. Timestamps log every score for accountability.

Participants can watch the leaderboard update in real time. That transparency removes the black-box feeling that makes people distrust results.

Create your competition and see how it works.

Running a competition soon? Get started with Score Judge and give your participants the fair, transparent experience they deserve.