Most pitch competition judging goes wrong in the same three ways: the criteria are vague, judges calibrate differently, and the final results feel like a black box to everyone who didn't win.

None of this is hard to fix. But you have to set things up before the event, not during it.

Build Your Rubric Before You Open Applications

The rubric is the contract between you and your participants. If you're still adjusting criteria on event day, you've already broken that contract.

A workable rubric for most startup pitch competitions looks something like this:

| Criterion | Weight | What judges are actually evaluating |
|---|---|---|
| Business Model | 25% | Is there a clear path to revenue? Do the unit economics make sense? |
| Market Opportunity | 25% | Is the problem real and the addressable market large enough to matter? |
| Team | 25% | Does this team have the skills and domain knowledge to execute? |
| Traction & Validation | 15% | Have they tested this with real customers? What did they learn? |
| Presentation | 10% | Could they explain this to an investor cold? Were they credible? |

Don't go above five criteria. Past five, judges start losing track of what they're evaluating, and the scores compress into noise.

Fix your weights before you publish the competition rules. Changing them after teams have built their pitches around your stated priorities is not fair — and participants will notice.
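To make the weighting concrete, here is a minimal Python sketch of how one judge's scores could be combined using the example weights from the rubric table above. The 1-10 scale, the criterion keys, the `weighted_score` helper, and the sample scores are all illustrative assumptions, not a prescribed implementation.

```python
# Weights from the example rubric; they must sum to 1.0.
WEIGHTS = {
    "business_model": 0.25,
    "market_opportunity": 0.25,
    "team": 0.25,
    "traction": 0.15,
    "presentation": 0.10,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Combine one judge's per-criterion scores into a weighted total."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the rubric criteria")
    return sum(scores[name] * weight for name, weight in weights.items())


# Hypothetical scores from one judge for one team (1-10 scale assumed).
example = {
    "business_model": 8,
    "market_opportunity": 6,
    "team": 7,
    "traction": 5,
    "presentation": 9,
}
total = weighted_score(example)
print(round(total, 2))  # 6.9
```

Rejecting a score sheet that misses a criterion, rather than silently treating it as zero, keeps an incomplete form from dragging a team down unnoticed.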

Your Judges Need a Calibration Session

One judge's "7" is another judge's "4." This isn't a character flaw — it's how humans work. Without calibration, your results will reflect scoring style as much as pitch quality.

Before judging starts, have all judges score the same two practice pitches. Then compare scores openly. When Judge A gives a team an 8 on Business Model and Judge B gives it a 3, that's the conversation you want to have before it affects real results. Align on what a strong business model looks like versus a weak one. It takes 20 minutes and eliminates the most common source of unfair outcomes.
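If you collect the practice scores digitally, a short script can point you at the disagreements worth discussing. The sketch below assumes a 1-10 scale and flags any criterion where the highest and lowest judge differ by at least 3 points. The threshold, the `calibration_gaps` name, and the sample data are assumptions chosen for illustration.

```python
def calibration_gaps(practice_scores: dict, threshold: int = 3) -> list:
    """Return (criterion, lowest_judge, highest_judge, gap) tuples for every
    criterion where the judges' spread meets the threshold, largest gap first."""
    gaps = []
    criteria = next(iter(practice_scores.values())).keys()
    for criterion in criteria:
        by_judge = {judge: s[criterion] for judge, s in practice_scores.items()}
        low = min(by_judge, key=by_judge.get)
        high = max(by_judge, key=by_judge.get)
        gap = by_judge[high] - by_judge[low]
        if gap >= threshold:
            gaps.append((criterion, low, high, gap))
    return sorted(gaps, key=lambda g: g[3], reverse=True)


# Hypothetical scores for one practice pitch.
practice = {
    "Judge A": {"business_model": 8, "team": 6},
    "Judge B": {"business_model": 3, "team": 7},
    "Judge C": {"business_model": 6, "team": 6},
}
flagged = calibration_gaps(practice)
print(flagged)  # [('business_model', 'Judge B', 'Judge A', 5)]
```

The output is a discussion agenda, not a verdict: it tells you which criterion to talk through, not which judge is right.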

A few other things worth handling upfront:

Conflicts of interest. Write a policy and enforce it. A judge shouldn't score their portfolio company, their employer's team, or anyone they've previously advised. If you discover a conflict mid-event, remove that judge's scores from the affected entry — don't average them in with a footnote.

Scoring range. Tell judges explicitly whether to use the full scale. Some judges never give a 10 (or a 1). If your scale is 1–10 and most scores land between 6 and 8, the differentiation collapses. It helps to define what the extremes mean: "1 means the team clearly doesn't understand their market" and "10 means this could be a Series A pitch tomorrow."
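The conflict-of-interest rule above is easy to state and easy to get wrong in a spreadsheet. As a rough sketch, assuming each judge's weighted total for a team is already computed, excluding a conflicted judge might look like this. The `team_average` helper and the sample numbers are hypothetical.

```python
from statistics import mean


def team_average(judge_totals: dict, conflicted: frozenset = frozenset()) -> float:
    """Average a team's weighted totals, leaving out any conflicted judges entirely."""
    eligible = [total for judge, total in judge_totals.items()
                if judge not in conflicted]
    if not eligible:
        raise ValueError("no eligible judges left for this entry")
    return mean(eligible)


# Hypothetical weighted totals for one team; Judge C previously advised them.
totals = {"Judge A": 7.2, "Judge B": 6.4, "Judge C": 9.5}
with_conflict = team_average(totals)
without_conflict = team_average(totals, conflicted=frozenset({"Judge C"}))
print(round(with_conflict, 2), round(without_conflict, 2))  # 7.7 6.8
```

The conflicted score is dropped only for the affected entry, so the same judge's scores for other teams still count.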

Three to Five Judges Is the Right Panel Size

One judge is a single opinion. Two judges create tie situations with no resolution. Three to five judges give you enough diversity to smooth out individual biases without turning deliberations into a committee exercise.

For judges, look for varied perspectives within the domain. Five repeat founders from the same city will have overlapping blind spots. A panel mixing an operator, a VC, and a domain expert from the industry you're targeting will surface better signal.

Don't ask judges to evaluate things they can't evaluate. A technical judge who's never managed a sales team shouldn't be scoring go-to-market strategies heavily. Either weight criteria to their strengths or pick generalist judges for generalist competitions.

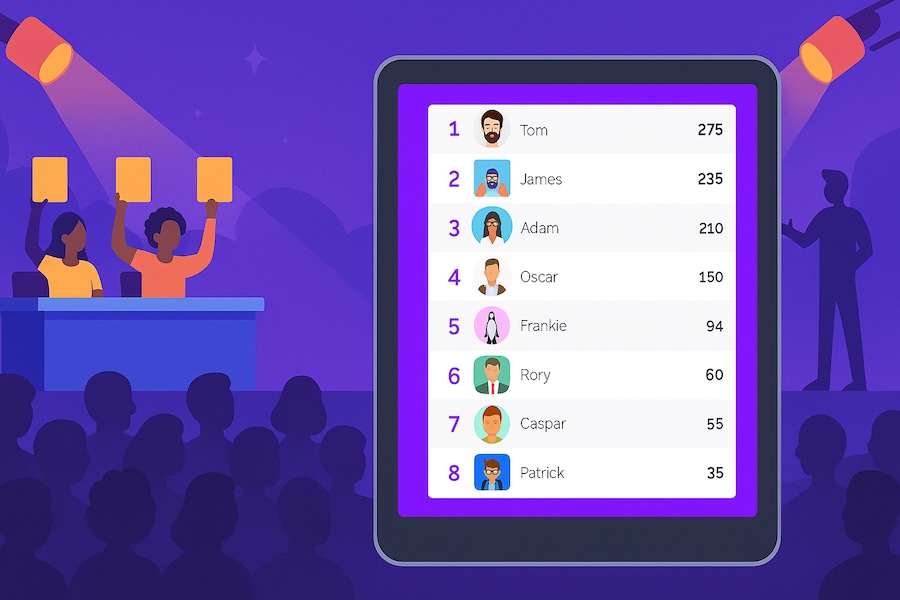

Handle the Live Leaderboard Question Before the Event

Some organizers display running scores throughout the day. Others reveal results only at the end. Both approaches are defensible — but they change the dynamics significantly.

Showing live scores keeps audiences and participants engaged. It also creates pressure on judges to be consistent, which isn't entirely bad. The downside: later pitches are at a disadvantage if judges raise their bar after seeing early scores, and teams who see they're losing may pitch differently in subsequent rounds.

Revealing scores only at the end eliminates those dynamics but removes the excitement of watching rankings shift. For most events, the live leaderboard wins on audience engagement — just make sure your rubric is locked before you turn it on.

What to Do When Judges Can't Agree

Disagreement between judges isn't a failure — it's information. If two judges are 5 points apart on a pitch, one of them may have domain knowledge the other lacks, or they may genuinely be evaluating different things.

For final rankings, use the average across judges. Don't drop outlier scores unless you have a principled reason to (and have stated that policy in advance). Dropping the "highest and lowest" sounds fair in theory but effectively reduces your panel size to n-2 and can be gamed by teams who know one judge likes them.
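A quick illustration of the panel-size point, as a sketch with made-up numbers: on a three-judge panel, dropping the highest and lowest score leaves exactly one judge deciding the result. Both helper names are hypothetical.

```python
from statistics import mean


def plain_average(scores: list) -> float:
    """Average every judge's weighted total."""
    return mean(scores)


def drop_high_low_average(scores: list) -> float:
    """Drop one highest and one lowest score, then average what remains."""
    if len(scores) < 3:
        raise ValueError("need at least three scores to drop two")
    kept = sorted(scores)[1:-1]
    return mean(kept)


# Hypothetical weighted totals from a three-judge panel for one team.
panel = [6.0, 7.0, 9.5]
print(plain_average(panel))          # 7.5
print(drop_high_low_average(panel))  # 7.0, which is a single judge's score
```

With five judges the trimmed version still rests on only three opinions, which is why the policy needs a stated reason behind it.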

For ties: set a tiebreaker policy before the event and publish it. The cleanest options are "highest score on the most-weighted criterion wins" or "earlier submission time wins." Making it up after a tie occurs invites accusations of favoritism, even if none exists.
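A published tiebreaker translates directly into a sort order. The sketch below chains the two options mentioned above (criterion score first, then submission time), which is one possible policy rather than a recommendation. It also assumes totals are compared after rounding to two decimals. Note that in the example rubric three criteria share the top weight of 25%, so a real policy would need to name the tiebreak criterion explicitly; here it is a plain field. The `Entry` class and all data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Entry:
    team: str
    total: float            # average weighted total across judges
    tiebreak_score: float   # average score on the criterion named in the policy
    submitted_at: datetime  # application submission time


def rank(entries: list) -> list:
    """Sort by total (descending), then tiebreak score (descending),
    then earlier submission time."""
    return sorted(
        entries,
        key=lambda e: (-round(e.total, 2), -e.tiebreak_score, e.submitted_at),
    )


entries = [
    Entry("Alpha", 7.5, 8.0, datetime(2024, 3, 1)),
    Entry("Beta", 7.5, 8.5, datetime(2024, 3, 3)),
    Entry("Gamma", 7.5, 8.5, datetime(2024, 3, 2)),
    Entry("Delta", 8.1, 7.0, datetime(2024, 3, 4)),
]
ranked = [e.team for e in rank(entries)]
print(ranked)  # ['Delta', 'Gamma', 'Beta', 'Alpha']
```

Because the whole policy lives in one sort key, the ranking is reproducible and easy to show to a team that asks how a tie was resolved.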

Use Software for the Calculation, Not a Spreadsheet

Computing weighted averages for 5 judges, 20 teams, and 5 criteria is arithmetic a spreadsheet handles fine in theory. In practice, you'll be copying scores from paper forms or email threads, making manual errors at the worst possible time, and explaining to the second-place team why the spreadsheet you're looking at doesn't match the announced results.

Score Judge handles the mechanics: judges each get their own private scoring link, weighted totals calculate automatically, and the live leaderboard updates as scores come in. You set up the rubric once before the event. Timestamps log every score, so you have a full audit trail if a result gets challenged.

It's free for competitions up to a certain size. Create your competition and you'll have the setup done in under 10 minutes.

One thing worth saying plainly: the best judging doesn't guarantee the best outcome for participants. Criteria favor certain business types. Judges have patterns. A fintech pitch in front of five SaaS investors will be evaluated differently than the same pitch in front of fintech practitioners.

Be transparent about who your judges are and what they're optimizing for. Teams who pitch to the wrong panel don't need better pitches — they need a better-matched competition. Publishing judge bios and being honest about the audience you're building helps everyone select the right event.

For general competition judging principles, see how to judge a competition fairly.